Most SEO teams I talk to describe the same strange pattern. Rankings look fine. Traffic has slipped a little, but nothing dramatic. And yet pipeline from organic has quietly eroded over the last two quarters, and nobody can point to the specific page that broke.

What broke is not a page. It is the retrieval layer. When a prospect asks ChatGPT "What is the best tool for X?", an answer gets generated from a blend of the model's training data and a live web search. Three or four sources get cited. If you are not one of them, the prospect never sees you, even if you still rank number one on Google for the same underlying keyword.

AI search engine optimization is the discipline of earning those citations. It is not a replacement for SEO. It is a new layer that sits on top, with different success metrics and different tactics. This guide walks through how to audit your current AI visibility, fix the technical and content gaps that keep you out of answers, measure what it is worth, and prioritize the work so it actually gets done.

Key Takeaways

- AI search engine optimization is about earning citations inside AI-generated answers, not chasing a ranked position
- Models pick sources using E-E-A-T signals, structured data, and the ability to extract a clean, self-contained answer
- You cannot improve what you do not measure: start by benchmarking your AI Share of Voice across ChatGPT, Perplexity, and Google AI Overviews
- Traditional SEO foundations still matter: indexing, authority, and technical health are now the price of entry
- The fastest wins come from optimizing a single high-value page, proving the playbook works, and scaling from there

Why traditional SEO is no longer enough

For twenty years the deal was clear. Rank a page, earn a click, get a shot at converting the visitor. That loop is breaking in two directions at once.

First, AI answer engines now intercept queries before they reach a SERP. A buyer asks ChatGPT for "CRMs for small insurance agencies" and gets a named shortlist in ten seconds. The click never happens on Google. Second, Google itself is surfacing AI Overviews on a growing share of commercial queries, and those summaries are pulling meaningful traffic away from the organic blue links. When a user's question is already answered above the fold, the incentive to scroll evaporates.

The practical consequence: you can be "winning" at SEO and still losing the account. Your page can rank in the top three, be crawled, be indexed, be linked to, and still be invisible to the buyer who started with an AI prompt.

Redefining what a "win" looks like

The old scoreboard tracked rankings, clicks, sessions, and conversions. That scoreboard still matters, but it is incomplete. On the AI layer, the scoreboard is different:

- Citations. How often does your domain or brand name appear inside an AI-generated answer to a target prompt?
- Mention share. When competitors get cited for the same prompt, what percentage of the named sources are you?
- Answer position. Are you the first source mentioned (high weight), or the fifth (barely read)?
- Consistency. Are you cited reliably across ChatGPT, Perplexity, Claude, and Google AI Overviews, or only in one of them?

Think of these the way you used to think about SERP rank position. If you are not measuring them, you have no idea whether your content is working in the new retrieval layer.

From traditional SEO to AI SEO

Most of the mental model carries over. Authority still matters. Structured content still matters. Fast, indexable pages still matter. What changes is what you are optimizing toward.

| Focus area | Traditional Google SEO | AI search engine optimization |
|---|---|---|
| Primary goal | Rank a page high enough to earn a click | Get the brand cited inside the AI answer |
| Success metric | Rankings, CTR, organic sessions | Citation count, mention share, answer position |
| Content shape | Keyword-targeted, long-form pages | Chunked, self-contained factual blocks |
| Authority signal | Backlinks and domain score | Backlinks, third-party mentions, and corroboration across sources |
| Technical floor | Indexable, fast, crawlable | Indexable, fast, crawlable, with structured data and no content hidden in JavaScript |
| Feedback loop | Search Console query data | Prompt-level mention tracking across models |

The overlap between the two columns of that table is the reason most teams can extend into AI SEO without hiring a new specialist. You already have the craft. You just need to point it at a different target.

From keywords to concepts

Traditional SEO rewards teams who own a keyword. AI SEO rewards teams who own a concept. A model answering "what is the best tool for onboarding remote designers" does not run a keyword match against your page. It retrieves candidate passages, compares them semantically, and picks the one that most cleanly represents the answer. If your entire positioning is "onboarding software," you are one of fifty generic options. If your positioning is "onboarding for remote design teams," you are a candidate for exactly that concept.

This is why generic value-prop language hurts you in AI answers. "Powerful, all-in-one, easy to use" is not a concept an AI can extract and attribute to you. A specific claim — "the only onboarding tool with Figma-native comment threads" — is something the model can quote and tie back to your domain.

How AI models decide which content to cite

AI models do not roll dice. Their citation behavior is an emergent consequence of how they were trained and how their retrieval stack is built. Once you see the inputs they weigh, the work you need to do gets obvious.

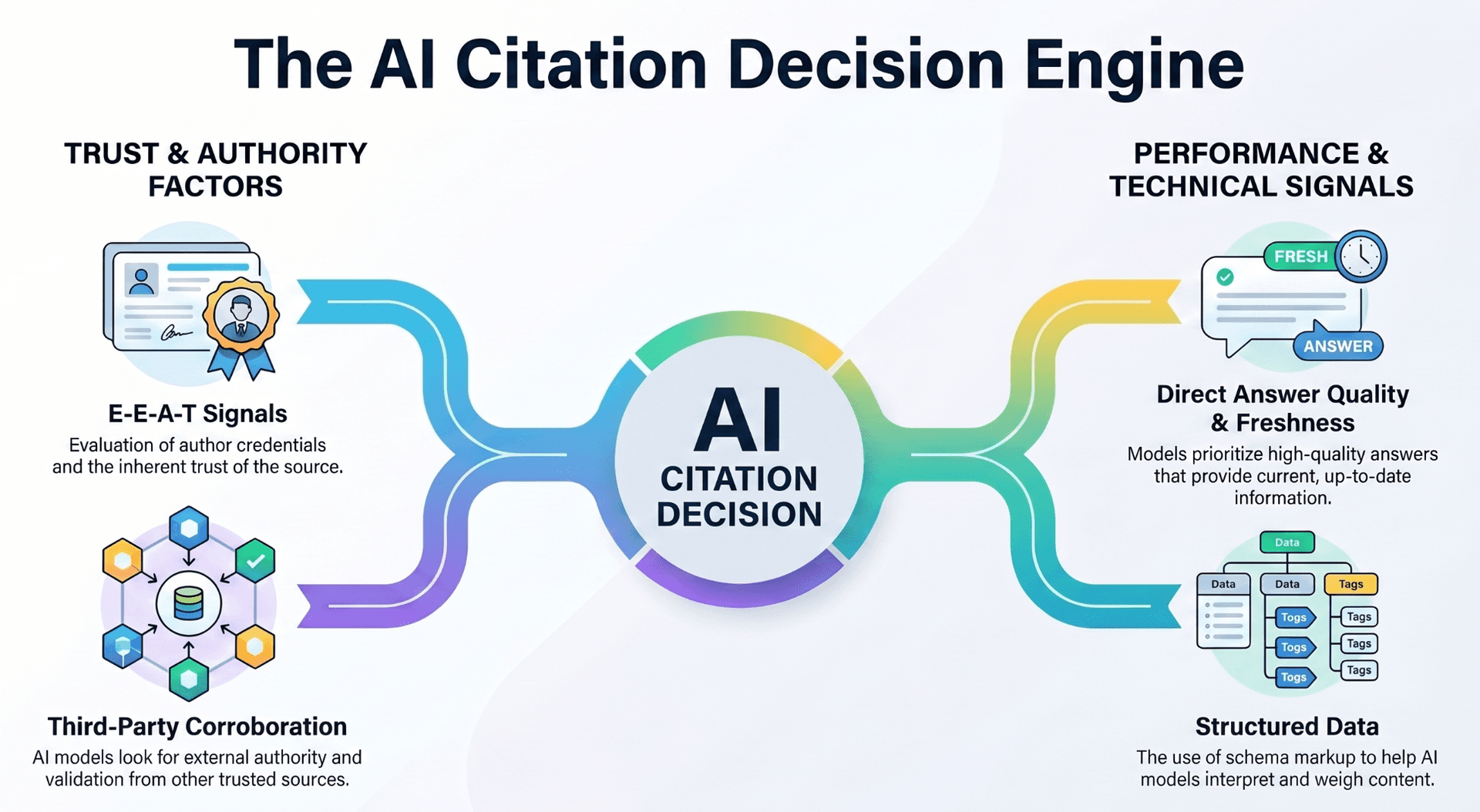

At a high level, the decision comes down to three inputs: does this source look trustworthy, does this content look extractable, and does this passage directly answer the prompt that was asked. If all three are yes, the model pulls you in. If any one is weak, you get skipped in favor of a competitor that looks cleaner on that axis.

E-E-A-T is now the price of entry

Google introduced E-E-A-T — Experience, Expertise, Authoritativeness, Trustworthiness — as a quality framework for human evaluators. LLM-based retrieval systems have absorbed a version of the same idea. They are explicitly engineered to prefer sources that look credible, because a model that cites sketchy content generates sketchy answers and quickly loses user trust.

What E-E-A-T signals look like in practice:

- Named authors with real credentials. A bylined article from a domain expert with a linked LinkedIn profile carries more weight than anonymous marketing copy.
- Consistent brand mentions across the web. If your company is referenced by five independent industry sites, the model treats that as consensus. Research from Profound Strategy found that brands are roughly 6.5x more likely to be cited by AI models from third-party sources than from their own domain.
- Published, citable evidence. Original research, survey data, benchmark results, case studies. If you are the only source for a data point, the model has to cite you when referencing it.
- Editorial backlinks from relevant sites. Links from your niche still function as authority votes. AI retrieval stacks overlap heavily with the same indexes that power Google and Bing, so link equity flows through.

A B2B analytics company I worked with saw an immediate lift by doing one thing: they rebuilt their "About" and author pages with full credentials, linked publications, and speaking history, then added author boxes on every post. Within six weeks their mention share on "data observability" prompts in ChatGPT climbed from roughly 3% to 14%. The content had not changed. The trust signals around it had.

Writing for machine readability

The second input is mechanical. An LLM answering a prompt is doing retrieval and extraction. It scans candidate passages, looks for the one that most cleanly maps to the question, and pulls a chunk of text it can paraphrase or quote.

Your content has to be easy to chunk:

- State the answer first. Every section should lead with a direct, self-contained statement of the point. Expand after. A passage that buries the answer three paragraphs down loses to a passage that states it in the first sentence.
- Use declarative headings. "How our platform works" is vague. "How GeoRamp tracks AI citations across ChatGPT, Perplexity, and Google AI Overviews" tells a retrieval system exactly what the section is about.
- Add structured data. `Article`, `FAQPage`, `HowTo`, and `Product` schema markup give the model a machine-readable index of your content. Pages with proper schema see roughly 30–40% higher AI visibility than unstructured equivalents.
- Avoid hiding content in interactive elements. Content tucked inside tabs, accordions, or JavaScript-rendered widgets is often invisible to retrieval crawlers. If a key spec or comparison lives only inside a tab, it effectively does not exist for AI search.
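
To make the structured-data point concrete, here is a minimal Python sketch that builds a schema.org `FAQPage` object and wraps it in a JSON-LD script tag. The helper names (`faq_jsonld`, `as_script_tag`) and the sample question are assumptions for illustration, and how the tag gets into your page template depends on your CMS.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the object in the script tag that JSON-LD consumers look for."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical sample content; replace with the real questions on the page.
example = faq_jsonld([
    (
        "Will AI search engine optimization replace traditional SEO?",
        "No. AI SEO is a layer on top of traditional SEO, not a replacement.",
    ),
])

if __name__ == "__main__":
    print(as_script_tag(example))
```

The markup should mirror questions and answers that are actually visible on the page. Answer text containing a literal `</script>` would need escaping before it is embedded this way.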

The Princeton GEO study (KDD 2024) tested nine optimization methods across more than 10,000 queries and found that clarity and structure alone produced visibility gains in the 15–30% range. Citing statistics added another 37%. Adding expert quotations added another 30%. These are not marginal effects. They are the difference between appearing in answers and being invisible.

How to audit your AI search visibility

You cannot improve what you are not measuring. Before you start shipping content changes, you need a baseline — and the baseline needs to cover every surface your buyers actually use.

Start with a manual spot-check

If you have never looked at your AI visibility, start here. You do not need software to run your first audit, just a spreadsheet and an hour.

- List ten to fifteen high-intent prompts. Think like your buyer. Include category prompts ("best CRM for ecommerce"), comparison prompts ("Tool A vs Tool B"), pain-point prompts ("how do I track churn"), and buyer-persona prompts ("what should a Series A startup use for customer support").
- Run each prompt across at least three surfaces. ChatGPT (with browsing), Perplexity, Claude, and Google AI Overviews cover most of the real-world footprint. Use fresh chats so prior context does not leak in.
- Log every domain cited. Record the source URL, the position it appeared in, and whether your brand was named.
- Calculate your mention share. Count every citation logged across all prompts and surfaces, then count how many of those citations were yours. Mention share = your citations ÷ total citations.
- Flag the gap prompts. The ones where you rank well on Google but do not appear in any AI answer are your highest-leverage targets. Those pages already have authority. They just are not being selected.

This gives you a snapshot. It will be spiky, low-volume, and manually expensive to repeat, but it will tell you roughly where you stand.
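
If the spreadsheet gets unwieldy, the same bookkeeping fits in a few lines of Python. This is a rough sketch under stated assumptions: the log rows, the domains, and the function names (`mention_share`, `gap_prompts`) are all made up for illustration, and the gap check only finds prompts where you were never cited. Whether you rank well on Google for those prompts is a separate lookup.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical audit log: one row per cited source per answer.
# Columns: prompt, surface, position in the answer, cited URL.
AUDIT_LOG = [
    ("best CRM for ecommerce", "ChatGPT", 1, "https://www.example-crm.com/ecommerce"),
    ("best CRM for ecommerce", "ChatGPT", 2, "https://reviews.example.org/crm-roundup"),
    ("best CRM for ecommerce", "Perplexity", 1, "https://reviews.example.org/crm-roundup"),
    ("how do I track churn", "ChatGPT", 1, "https://blog.example.net/churn"),
    ("how do I track churn", "Perplexity", 1, "https://www.example-crm.com/churn-guide"),
    ("Tool A vs Tool B", "Claude", 1, "https://reviews.example.org/a-vs-b"),
]


def domain_of(url):
    """Return the host of a URL, lowercased, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def mention_share(log, my_domain):
    """Your citations divided by all citations in the log."""
    counts = Counter(domain_of(url) for _, _, _, url in log)
    total = sum(counts.values())
    return counts[my_domain] / total if total else 0.0


def gap_prompts(log, my_domain):
    """Prompts in the log where your domain was never cited on any surface."""
    all_prompts = {prompt for prompt, _, _, _ in log}
    cited = {prompt for prompt, _, _, url in log if domain_of(url) == my_domain}
    return sorted(all_prompts - cited)


if __name__ == "__main__":
    print(f"Mention share: {mention_share(AUDIT_LOG, 'example-crm.com'):.0%}")
    print("Gap prompts:", gap_prompts(AUDIT_LOG, "example-crm.com"))
```

Keeping one row per citation, rather than one row per prompt, is what lets the same log answer the position and consistency questions later.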

Why you need to automate this

A manual audit is a photograph. AI answers are a film. The same prompt asked twice in the same day can return different cited sources, because the model's retrieval layer re-runs each time, pulling fresh results. A baseline that was accurate on Monday may be wrong by Friday if a competitor published a widely linked study in between.

To operate this as a real channel, you need:

- Daily or weekly tracking against a consistent prompt set
- Cross-model coverage so you do not overfit to one AI's idiosyncrasies
- Per-prompt drill-downs that show which specific query surfaced which source on which day
- Competitor benchmarking so you can see who is taking share from you and with which URLs

This is the problem we built GeoRamp to solve. The core mental model is the same as Google Search Console: you ship content, you track a mention metric by prompt, and you iterate. Without that feedback loop, you are guessing.

Why your content isn't getting cited

Once you have a baseline, the interesting question shifts. You stop asking "are we cited" and start asking "why are we being skipped for this specific prompt." In my experience the answers cluster into a short list of common failures.

- The answer is buried. Your page ranks well on Google, but the passage that would answer the prompt is six paragraphs in, wrapped in marketing language. A competitor with a weaker domain but a cleaner lead-with-the-answer structure gets the citation.
- The content is hidden inside JavaScript. Interactive product specs, pricing tables loaded after user interaction, and comparison widgets rendered client-side are often invisible to retrieval crawlers. A real example: an e-commerce kitchen brand we audited was losing "best stand mixer under $500" prompts because their spec comparisons lived inside a tab that required a click to reveal.
- The claim is too generic. If your page repeats the same "powerful, intuitive, enterprise-grade" language as every competitor, the model has no way to pick you out. Specificity is what makes a passage quotable.
- The brand does not appear in enough third-party sources. Your own domain can tell the model who you are, but third-party corroboration is what confirms it. If nobody is writing about you on review sites, industry blogs, or community forums, the model has no outside signal to validate a citation.
- The technical setup is blocking the bot. Check `robots.txt` for `GPTBot`, `ChatGPT-User`, `PerplexityBot`, `ClaudeBot`, and `Bingbot` entries. A 2025 study from Originality.ai found that more than 35% of top publishers block at least one major AI bot. If you are among them, you are opting out of citations by policy.

Diagnosing which failure applies to a given page is often the hardest part of this work. A good automated tool will tell you which pages are indexed, which are being crawled by AI bots, and which content blocks are actually readable to retrieval systems. A bad one just shows you a score.
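
The robots.txt failure is the easiest one to rule out yourself. Below is a minimal sketch using Python's standard-library `urllib.robotparser`. The bot list comes from the checklist above, while the sample robots.txt, the URL, and the `blocked_bots` helper are assumptions for illustration. In practice you would pass in the contents of your own robots.txt.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this guide.
AI_BOTS = ["GPTBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot", "Bingbot"]


def blocked_bots(robots_txt, url, bots=AI_BOTS):
    """Return the bots that the given robots.txt text disallows for this URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    print(blocked_bots(SAMPLE_ROBOTS, "https://www.example.com/pricing"))
```

This only covers robots.txt policy. A firewall or CDN rule that rejects the same user agents would not show up here, so server logs are still worth a look.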

Tactics to earn more AI citations

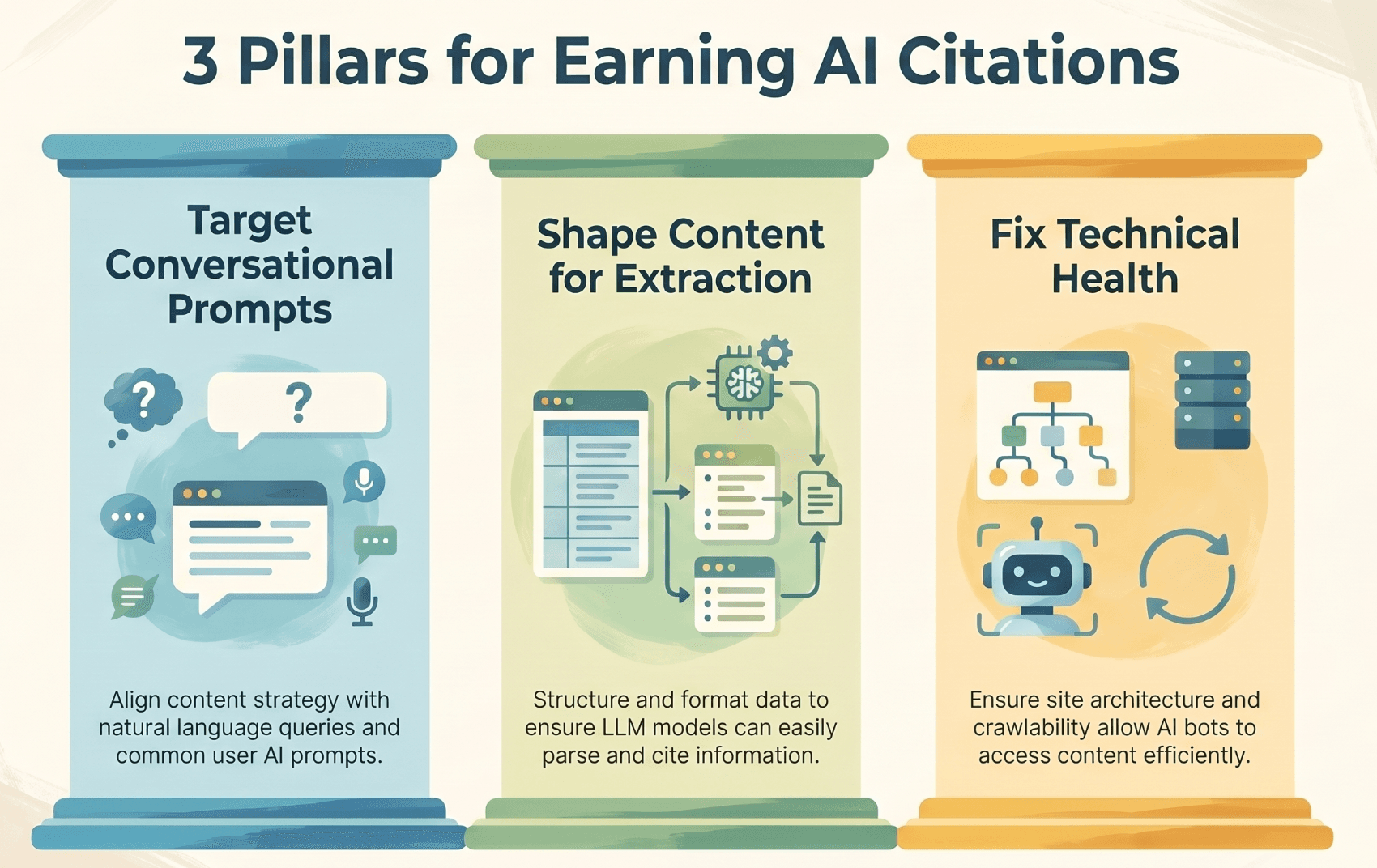

Once you have diagnosed the gaps, the actual work falls into three pillars. I have yet to see a team get lift from any of them in isolation. You need all three.

Target conversational prompts, not just keywords

A keyword is a fragment. A prompt is a full question, usually with intent and context already embedded. "Project management software" is a keyword. "What project management tool works best for a 20-person design agency that uses Figma" is a prompt. The second one is what your buyers actually type into ChatGPT.

To shift your content from keyword targets to prompt targets:

- Interview sales and support. Ask them what questions prospects arrive with. Those are the prompts your buyers are probably running against AI models.
- Mine your own logs. Product analytics, support tickets, and onboarding surveys are full of phrased questions. Extract them, cluster them, and treat them as a prompt map.
- Write pages that match the intent. A prompt about "project management for a 20-person design agency" deserves a page that says exactly that, not a generic "project management software" landing page. Specificity wins the citation.
- Use full-sentence H2s and H3s. "Should a 20-person agency use Asana or Linear?" is a heading an AI can map directly to a user's prompt. "Asana vs Linear" works too, but the specific framing extends your coverage to long-tail prompts.

If you already have a playbook for ChatGPT-specific content, you have most of the scaffolding. The only extension here is thinking about prompts across the full set of AI surfaces your buyers use.

Shape content for extraction

The second pillar is making each section of your content behave as a standalone answer.

- One clear claim per section. If a section tries to make four points, an AI model will struggle to decide which one to quote. Pick the single most important claim, state it first, and organize the rest as supporting detail.
- Use bulleted lists and small tables. Structured content is easier to chunk. A five-row comparison table that directly answers "Tool A vs Tool B on price, team size, integrations" is more likely to get cited than a paragraph that buries the same information.
- Include original data. A sentence like "We surveyed 300 SaaS growth leads in Q1 2026 and found that 62% plan to shift budget into AI search optimization" is extractable, citable, and unique. Original data is the single highest-leverage content investment you can make for AI SEO.
- Name yourself and competitors plainly. LLMs extract relationships between entities. A sentence that reads "GeoRamp, Profound, and Otterly.ai all track AI citations; GeoRamp uniquely includes competitor benchmarking" is richly structured for a model to pull apart. A vague marketing paragraph is not.

Fix your technical health

Technical SEO is still the floor. If crawlers cannot read you, nothing above matters.

- Unblock AI bots in `robots.txt`. Explicitly allow `GPTBot`, `ChatGPT-User`, `PerplexityBot`, `ClaudeBot`, `CCBot`, and Google's `Googlebot`/`Google-Extended` entries based on your policy preferences.
- Ship fast, server-rendered pages. Core Web Vitals still matter, and client-rendered content is a known gap for retrieval systems. If your content only exists after a JavaScript render, plan for server-side rendering or static generation.
- Submit sitemaps to Google and Bing. ChatGPT's real-time retrieval leans heavily on Bing's index. Most teams skip Bing Webmaster Tools, even though submitting a sitemap there is a free way to speed up AI indexing.
- Add structured data. `Article`, `FAQPage`, `HowTo`, and `Product` schemas help models understand your content's role. Schema adoption alone correlates with meaningful visibility lift.
- Audit for cloaking and rendering issues. Check that the content served to bots matches what you serve to users. Mismatches are more common than you would think, especially on marketing sites built with heavy client-side personalization.

The three pillars work because they compound. A well-shaped page on a prompt nobody searches does nothing. A page on the right prompt that crawlers cannot read does nothing. Fix all three together.
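
One quick way to test the rendering point is to check whether your key phrases exist in the HTML the server sends, before any JavaScript runs. The sketch below uses Python's standard-library `html.parser`. The `VisibleText` class, the `missing_phrases` helper, and both sample pages are assumptions for illustration. It only catches text that is absent from the initial HTML, so content that is present but hidden by CSS inside a tab would still pass.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def normalize(text):
    """Lowercase and collapse runs of whitespace."""
    return " ".join(text.split()).lower()


def missing_phrases(raw_html, phrases):
    """Return the phrases that do not appear in the page's text content."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = normalize(" ".join(parser.chunks))
    return [p for p in phrases if normalize(p) not in text]


# Hypothetical pages: the same spec rendered on the server vs. in the browser.
SERVER_RENDERED = "<html><body><h1>Stand mixers</h1><p>Bowl capacity: 5 qt</p></body></html>"
CLIENT_RENDERED = (
    '<html><body><div id="root"></div>'
    '<script>render({"spec": "Bowl capacity: 5 qt"})</script></body></html>'
)

if __name__ == "__main__":
    print(missing_phrases(SERVER_RENDERED, ["Bowl capacity: 5 qt"]))
    print(missing_phrases(CLIENT_RENDERED, ["Bowl capacity: 5 qt"]))
```

Feed it the raw response body from a plain HTTP fetch of the page, not the DOM copied out of browser dev tools, since the latter already includes whatever JavaScript rendered.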

Measuring the business impact of AI SEO

The hardest objection I hear from CMOs is that AI SEO is hard to measure. It is not harder than any other brand channel. It just requires you to translate visibility into dollars using a framework you probably already use for earned media.

Citations as earned media

Every time an AI model cites your brand in an answer, a qualified prospect saw your name in the exact moment they were researching a purchase. That is a high-quality impression. The right way to value it is the same way you would value a mention in a relevant industry newsletter: calculate the cost of buying that impression through paid acquisition.

If the prompt is "best CRM for B2B SaaS," the equivalent Google Ads CPC for that intent might be $12. If your brand is cited in the answer, the user has seen your name with buying intent. Value each citation roughly at that CPC, discount for shared attention with other cited sources, and you have an earned media value number.

A simple revenue estimation framework

You do not need a research paper to get a rough number. This three-step process gets most teams to a directionally correct estimate in an afternoon:

- Count your citations. Over a 30-day window, count total brand mentions across your tracked prompt set and AI surfaces.
- Assign an impression value. Use the average Google Ads CPC for the commercial keywords closest to your tracked prompts. This is your per-citation earned media value. Apply a discount if you are one of several cited sources in each answer (a 0.3–0.5 multiplier is reasonable).
- Model pipeline conversion. Apply your typical visitor-to-lead and lead-to-customer conversion rates to an estimate of how many of those citation-driven impressions convert into traffic. Multiply by average deal size.

This is directional, not exact. But it gives your finance team a number to react to, which is usually what is missing when AI SEO conversations stall at the budget stage.

A worked example

Take a B2B SaaS company with a $15,000 average contract value and the following numbers from their first month of tracked data:

- 50 cited mentions across 30 commercial prompts

- Average CPC on equivalent Google keywords: $12

- Estimated CTR from cited mentions to their site: 5%

- Visitor-to-lead rate on their pricing page: 3%

- Lead-to-customer rate: 20%

Earned media value: 50 citations × $12 × 0.4 attention-share discount = $240 in saved ad spend per month. Attributed pipeline: 50 citations × 5% CTR = 2.5 site visits per month × 3% lead rate = 0.075 leads × 20% close rate = 0.015 customers × $15,000 ACV = ~$225 in attributed revenue per month.

Those numbers look small because the volume is small. The point of the exercise is the growth curve, not the absolute number. If the same team grows from 50 mentions to 500 over six months, the earned media value scales linearly to $2,400/month and the pipeline contribution scales to about $2,250/month, with compounding effects as repeat mentions reinforce brand recall. That is why measuring this early is so important: you cannot optimize a curve you cannot see.
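
The arithmetic is easy to get wrong by a decimal place, so it is worth scripting. This sketch recomputes the example from the inputs listed above. The function and parameter names are my own, and the 0.4 attention-share discount is the same assumption used in the earned media line.

```python
def earned_media_value(citations, cpc, attention_share):
    """Citations valued at the equivalent CPC, discounted for shared attention."""
    return citations * cpc * attention_share


def attributed_revenue(citations, ctr, visitor_to_lead, lead_to_customer, acv):
    """Walk citations down the funnel: visits -> leads -> customers -> revenue."""
    visits = citations * ctr
    leads = visits * visitor_to_lead
    customers = leads * lead_to_customer
    return customers * acv


# Inputs from the worked example (monthly figures).
FUNNEL = {
    "ctr": 0.05,
    "visitor_to_lead": 0.03,
    "lead_to_customer": 0.20,
    "acv": 15_000,
}

if __name__ == "__main__":
    for citations in (50, 500):
        emv = earned_media_value(citations, cpc=12, attention_share=0.4)
        revenue = attributed_revenue(citations, **FUNNEL)
        print(f"{citations} citations: EMV ${emv:,.0f}, attributed revenue ${revenue:,.0f}")
```

Run as written, the 50-citation case works out to $240 in earned media value and about $225 in attributed revenue (0.015 expected customers at a $15,000 ACV). Both figures scale linearly with citation count, so this model does not capture any compounding from brand recall.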

How to prioritize your AI SEO work

Every team I advise has the same problem: a list of twenty possible actions and no clear sense of which to do first. A simple impact/effort matrix cuts through that.

| Action | Impact | Effort |
|---|---|---|
| Unblock AI bots in robots.txt | High | Low |
| Add structured data to core money pages | High | Low |
| Rewrite generic positioning to a specific claim | High | Medium |
| Build an author page with credentials and links | Medium | Low |
| Publish one piece of original research or data | High | Medium |
| Restructure a high-value page to lead with the answer | High | Medium |
| Launch a weekly tracked prompt set in a monitoring tool | High | Low |
| Pursue third-party review and community mentions | High | High |
| Migrate a client-rendered page to server-rendered | Medium | High |

The first pass through this list should be every High impact / Low effort item. Those are non-optional. After that, the order is driven by where your current gaps are biggest — if you have low third-party mentions, prioritize that pillar. If your pages are hidden behind JavaScript, prioritize rendering.

Resist the urge to touch everything at once. A narrow, measured sprint beats a broad, unmeasured one.
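
If your own list is longer than the table above, a small script keeps the ordering honest. This is only one possible ordering, sketched under the assumption that impact is sorted first and effort breaks ties. The `RANK` mapping and the `prioritize` helper are illustrative names, and the rows are copied from the matrix.

```python
RANK = {"Low": 0, "Medium": 1, "High": 2}

# (action, impact, effort) rows copied from the matrix above.
ACTIONS = [
    ("Unblock AI bots in robots.txt", "High", "Low"),
    ("Add structured data to core money pages", "High", "Low"),
    ("Rewrite generic positioning to a specific claim", "High", "Medium"),
    ("Build an author page with credentials and links", "Medium", "Low"),
    ("Publish one piece of original research or data", "High", "Medium"),
    ("Restructure a high-value page to lead with the answer", "High", "Medium"),
    ("Launch a weekly tracked prompt set in a monitoring tool", "High", "Low"),
    ("Pursue third-party review and community mentions", "High", "High"),
    ("Migrate a client-rendered page to server-rendered", "Medium", "High"),
]


def prioritize(actions):
    """Sort by impact (highest first), then by effort (lowest first)."""
    return sorted(actions, key=lambda row: (-RANK[row[1]], RANK[row[2]]))


def quick_wins(actions):
    """The High impact / Low effort items that should go first."""
    return [name for name, impact, effort in actions
            if impact == "High" and effort == "Low"]


if __name__ == "__main__":
    for name, impact, effort in prioritize(ACTIONS):
        print(f"{impact:<6} / {effort:<6}  {name}")
```

Treat everything after the quick wins as a starting point only. The ordering there should follow your biggest gaps rather than the sort key.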

Your 3-step action plan

If you read nothing else, read this. These three steps are what I would do starting from zero on a new account today.

Step 1: Audit your current AI footprint

Pick your ten highest-intent commercial prompts. Run them across ChatGPT, Perplexity, Claude, and Google AI Overviews. Log every domain cited. Calculate your mention share. Note which prompts you appear in, which you are close on, and which you are invisible for.

Do not skip this step. Without a baseline you cannot tell if anything you do later is working. A free scan at app.georamp.com automates this in a few minutes if you want to start with real data instead of a spreadsheet.

Step 2: Optimize one high-value page

Pick one page. The best candidate is a page that already ranks well on Google for a commercial query but does not appear in any AI answer for the equivalent prompt. You have an authority surplus on that page — the retrieval system is just not selecting you.

On that one page, apply the tactics from this guide:

- Rewrite the hero and first section to lead with a specific, quotable claim
- Restructure H2s and H3s as full questions that match real prompts
- Add `Article` and `FAQPage` schema
- Surface one original data point or benchmark
- Make sure the page is server-rendered and indexed by Bing

Ship the changes, wait two to four weeks, and re-run your tracked prompts. You are looking for directional movement, not perfection.

Step 3: Measure, iterate, and scale

Once you have a measurable lift on the first page, codify what worked. Write down the before/after mention share, the changes that mattered, and the time it took. Then apply the same playbook to the next five to ten pages on your priority list.

The compounding effect is real. Each time you rewrite a page successfully, you learn something specific about how the models weight your domain. After ten iterations you have a repeatable process. After thirty, you have a channel your CMO can actually forecast.

This is the part most teams skip. They audit once, optimize ad-hoc, and never close the loop. The teams that win treat AI SEO the same way they treated traditional SEO a decade ago: instrumented, iterative, and compounding.

Frequently asked questions

Will AI search engine optimization replace traditional SEO?

No. AI SEO is a layer on top, not a replacement. AI retrieval systems lean heavily on the same indexes and authority signals that power Google and Bing, which means strong technical SEO, quality backlinks, and clear page structure are still prerequisites for being cited. What changes is the success metric on top of that foundation: instead of ranking a page to earn a click, you are structuring it to earn a citation. Teams that already do SEO well are in the best position to extend into AI SEO. Teams that have neglected the fundamentals will find AI SEO brutal — the gap compounds quickly.

What's the single most important factor for getting cited by AI?

If I had to pick one, it is E-E-A-T backed by third-party corroboration. Models are explicitly engineered to favor trustworthy sources, and the clearest signal of trust is consistent mentions across independent industry sites. Backlinks still matter. Author credentials matter. But the thing that moves the needle fastest is making your brand appear as an authority in places you do not control: review sites, community threads, guest articles, and industry roundups. That off-domain presence is what the retrieval layer reads as social proof.

How long does it take to see results from AI SEO?

Faster than traditional SEO, usually. Because the retrieval layer re-runs live against a changing index, improvements to a page can show up in mention share within days, not months. The caveat is that authority-level changes — new backlinks, more third-party mentions, bigger brand signal — follow traditional SEO timelines. Expect content-level fixes to move within two to four weeks and authority-level work to take three to six months. Plan the work accordingly: ship the tactical page changes early, and let the slower compounding investments run in parallel.